Foundation models such as GPT-4 have driven rapid improvement in AI. However, the most powerful models are closed commercial models or only partially open. RedPajama is a project to create a set of leading, fully open-source models. Today, we are excited to announce the completion of the first step of this project: the reproduction of the LLaMA training dataset of over 1.2 trillion tokens.

The most capable foundation models today are closed behind commercial APIs, which limits research, customization, and their use with sensitive data. Fully open-source models hold the promise of removing these limitations, if the open community can close the quality gap between open and closed models. Recently, there has been much progress along this front. In many ways, AI is having its Linux moment. Stable Diffusion showed that open source can not only rival the quality of commercial offerings like DALL-E but can also lead to incredible creativity from broad participation by communities around the world. A similar movement has now begun around large language models with the recent release of semi-open models like LLaMA, Alpaca, Vicuna, and Koala, as well as fully open models like Pythia, OpenChatKit, Open Assistant, and Dolly.

We are launching RedPajama, an effort to produce a reproducible, fully open, leading language model. RedPajama is a collaboration between Together, Ontocord.ai, ETH DS3Lab, Stanford CRFM, and Hazy Research. RedPajama has three key components:

- Pre-training data, which needs to be both high quality and have broad coverage

- Base models, which are trained at scale on this data

- Instruction tuning data and models, which improve the base model to make it usable and safe

Today, we are releasing the first component, pre-training data.

“The RedPajama base dataset is a 1.2 trillion token fully-open dataset created by following the recipe described in the LLaMA paper.”

Our starting point is LLaMA, which is the leading suite of open base models for two reasons. First, LLaMA was trained on a very large (1.2 trillion tokens) dataset that was carefully filtered for quality. Second, the 7 billion parameter LLaMA model was trained for much longer, well beyond the Chinchilla-optimal point, to ensure the best quality at that model size. A 7 billion parameter model is particularly valuable for the open community because it can run on a wide variety of GPUs, including many consumer-grade GPUs. However, LLaMA and all its derivatives (including Alpaca, Vicuna, and Koala) are only available for non-commercial research purposes. We aim to create a fully open-source reproduction of LLaMA that would be available for commercial applications and would provide a more transparent pipeline for research.
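To give a rough sense of what "well beyond the Chinchilla-optimal point" means, here is a back-of-the-envelope sketch. It assumes the commonly quoted rule of thumb of about 20 training tokens per parameter for compute-optimal training, which is not stated in this post. It also assumes, purely for illustration, a single pass over the full 1.2 trillion token dataset; the number of tokens the 7 billion parameter model actually saw may differ.

```python
# Assumption (not from this post): compute-optimal training uses roughly
# 20 tokens per model parameter. Treat this as a rule of thumb only.
TOKENS_PER_PARAM_HEURISTIC = 20


def compute_optimal_tokens(n_params: int) -> int:
    """Approximate compute-optimal token budget under the 20x heuristic."""
    return TOKENS_PER_PARAM_HEURISTIC * n_params


def overtraining_factor(n_params: int, training_tokens: int) -> float:
    """How many times larger the token budget is than the heuristic optimum."""
    return training_tokens / compute_optimal_tokens(n_params)


params_7b = 7_000_000_000
# Illustrative assumption: one pass over the full 1.2T-token dataset.
dataset_tokens = 1_200_000_000_000

optimal = compute_optimal_tokens(params_7b)
factor = overtraining_factor(params_7b, dataset_tokens)

print(f"Heuristic optimum for 7B parameters: {optimal:,} tokens")
print(f"1.2T tokens is about {factor:.1f}x that budget")
```

Under these assumptions the heuristic optimum is about 140 billion tokens, so a 1.2 trillion token budget is several times larger. That extra training is the trade-off the paragraph describes: more training compute in exchange for a better model at a size that fits on modest hardware.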

The RedPajama base dataset

The full RedPajama 1.2 trillion token dataset and a smaller, more consumable random sample can be downloaded through Hugging Face. The full dataset is ~5TB unzipped on disk and ~3TB to download compressed.

RedPajama-Data-1T consists of seven data slices:

- CommonCrawl: Five dumps of CommonCrawl, processed using the CCNet pipeline, and filtered via several quality filters including a linear classifier that selects for Wikipedia-like pages.

- C4: Standard C4 dataset

- GitHub: GitHub data, filtered by licenses and quality

- arXiv: Scientific articles, with boilerplate removed

- Books: A corpus of open books, deduplicated by content similarity

- Wikipedia: A subset of Wikipedia pages, with boilerplate removed

- StackExchange: A subset of popular websites under StackExchange, with boilerplate removed
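The list above mentions deduplication by content similarity without naming a method. As one possible illustration, and not a description of the actual RedPajama pipeline, the sketch below flags near-duplicates using Jaccard similarity over word shingles. The function names, the shingle size, and the 0.8 threshold are all hypothetical choices made for this example.

```python
def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word shingles in a lowercased text."""
    words = text.lower().split()
    if len(words) < k:
        # Short texts become a single shingle (or none if empty).
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of intersection over size of union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def deduplicate(docs: list, threshold: float = 0.8) -> list:
    """Keep the first document of each near-duplicate group.

    This is a quadratic-time sketch; a corpus-scale pipeline would need
    an approximate method instead of all-pairs comparison.
    """
    kept = []
    kept_shingles = []
    for doc in docs:
        s = shingles(doc)
        if all(jaccard(s, t) < threshold for t in kept_shingles):
            kept.append(doc)
            kept_shingles.append(s)
    return kept


# Hypothetical toy corpus: the first two entries differ only in casing.
books = [
    "it was the best of times it was the worst of times",
    "It was the best of times it was the worst of times",
    "call me ishmael some years ago never mind how long precisely",
]
unique = deduplicate(books)
print(len(unique), "of", len(books), "documents kept")
```

The threshold controls how aggressive the filter is: a lower value removes more loosely related texts, while a value near 1.0 only removes near-verbatim copies.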

For each data slice, we conduct careful data pre-processing and filtering, and tune our quality filters to roughly match the token counts reported by Meta AI in the LLaMA paper.

We are making all data pre-processing and quality filters openly available on GitHub. Anyone can follow the data preparation recipe and reproduce RedPajama-Data-1T.
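The idea of tuning a quality filter so that a slice lands near a target token count can be sketched as follows. This is a minimal illustration under stated assumptions, not the released pipeline: `pick_threshold`, the score values, and the token counts are hypothetical, and the quality scores are assumed to come from some classifier that assigns higher values to better documents.

```python
def pick_threshold(scored_docs, target_tokens):
    """Choose a score cutoff that keeps at most `target_tokens` tokens.

    scored_docs: iterable of (quality_score, token_count) pairs.
    Returns (threshold, kept_tokens). Documents with a score greater than
    or equal to the threshold are kept. Assumes scores are distinct; with
    ties at the cutoff, a real filter would need a tie-breaking rule.
    """
    ranked = sorted(scored_docs, key=lambda d: d[0], reverse=True)
    kept_tokens = 0
    threshold = float("inf")  # keeps nothing if even the best doc is too big
    for score, n_tokens in ranked:
        if kept_tokens + n_tokens > target_tokens:
            break
        kept_tokens += n_tokens
        threshold = score
    return threshold, kept_tokens


# Hypothetical (score, token_count) pairs for a tiny slice.
docs = [(0.9, 100), (0.2, 300), (0.7, 250), (0.5, 400), (0.1, 500)]
threshold, kept = pick_threshold(docs, target_tokens=800)
print(f"keep documents with score >= {threshold} ({kept} tokens)")
```

Walking down the ranked list until the budget is reached keeps the highest-scoring documents first, so tightening or loosening the target directly trades volume against the filter's notion of quality.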

Interactively analyzing the RedPajama base dataset

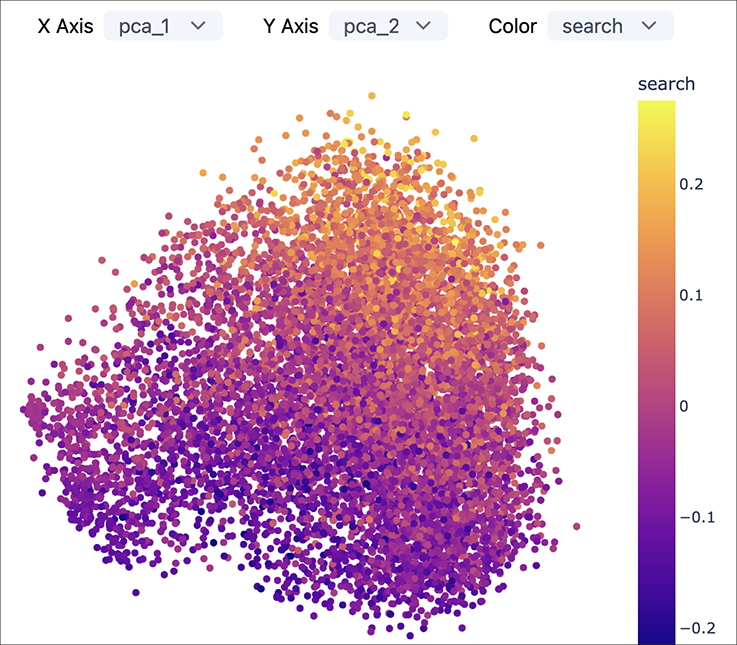

In collaboration with the Meerkat project, we are releasing a Meerkat dashboard and embeddings for exploring the Github subset of the corpus. The image below shows a preview of the dashboard.

You can find instructions on how to install and use the dashboard on GitHub.

Up next: Models, instructions & OpenChatKit

Having reproduced the pre-training data, the next step is to train a strong base model. As part of the INCITE program, with support from Oak Ridge Leadership Computing Facility (OLCF), we are training a full suite of models, with the first becoming available in the coming weeks.

With a strong base model in hand, we are excited to instruction-tune the models. Alpaca illustrated the power of instruction tuning: with merely 50K high-quality, diverse instructions, it was able to unlock dramatically improved capabilities. Via OpenChatKit, we received hundreds of thousands of high-quality natural user instructions, which will be used to release instruction-tuned versions of the RedPajama models.

Acknowledgements

We are grateful for the work of the growing open-source AI community that made this project possible.

That includes:

- Participants in building the RedPajama dataset, including Ontocord.ai, ETH DS3Lab, Université de Montréal, Stanford Center for Research on Foundation Models (CRFM), the Stanford Hazy Research group, and LAION.

- Meta AI — Their inspiring work on LLaMA shows a concrete path towards building strong language models, and it is the original source for our dataset replication.

- EleutherAI — This project is built on the backs of the great team at EleutherAI — including the source code they provided for training GPT-NeoX.

- An award of computer time was provided by the INCITE program. This research also used resources of the Oak Ridge Leadership Computing Facility (OLCF), which is a DOE Office of Science User Facility supported under Contract DE-AC05-00OR22725.
