Fine-Tuning

Fine-tune open-source models for real production use

Improve accuracy, reduce hallucinations, and control behavior — without managing training infrastructure.

Why fine-tune models with Together AI?

Build models that are faster, more accurate, and fully yours

Reliable infrastructure at any scale

Multi-node orchestration that eliminates job failures. Fine-tune 100B+ models (DeepSeek-V3, Qwen3-235B) that break other platforms, with the reliability to experiment rapidly.

Research-driven performance gains

ML systems research built into every job. Train with 2-4x longer contexts at no extra cost, advanced DPO variants from SOTA recipes, and continuous optimizations that make your runs faster over time.

Universal model compatibility

Fine-tune any open-source model from Hugging Face Hub. No vendor lock-in, no format conversions — seamless integration with your existing workflows.

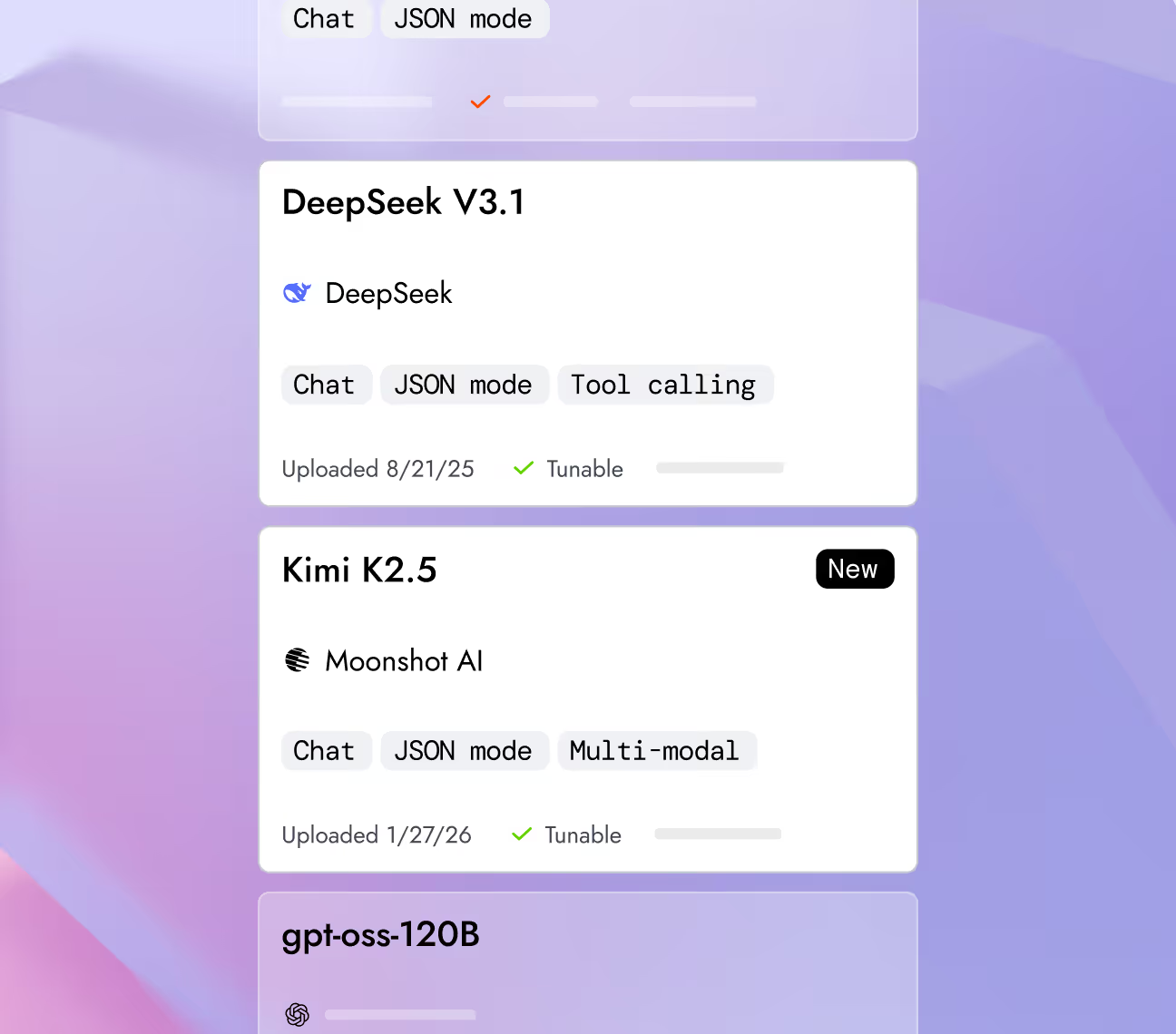

Fine-tune leading models

Explore top-performing models across text, image, video, code, and voice.

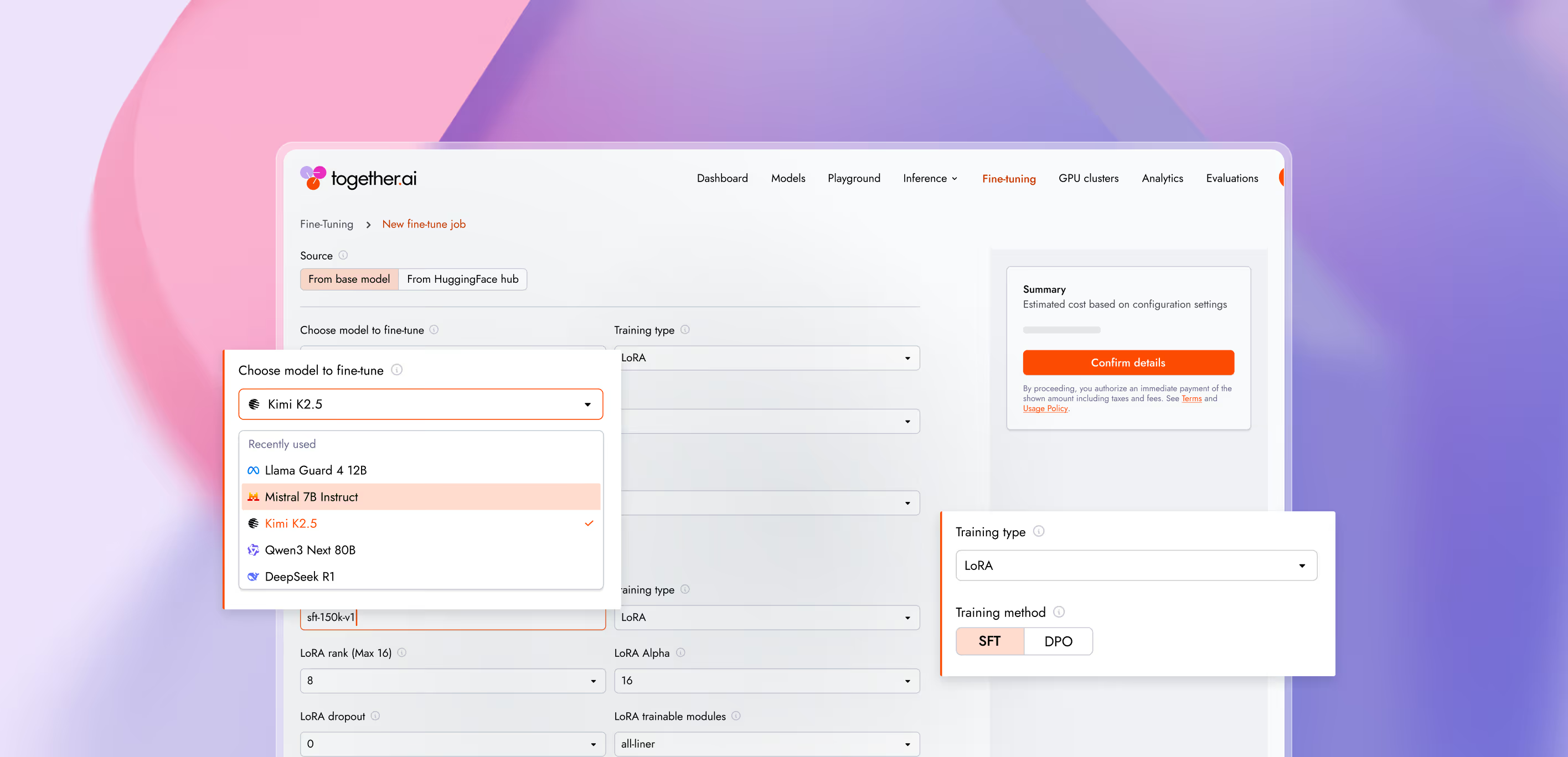

Fine-tuning options

Choose how fine-tuned models are trained and hosted based on dataset size, cost, and control.

- LoRA fine-tuning

Lightweight fine-tuning for fast iteration and lower cost.

Best forSmall to medium datasetsFast training & deploymentEasy to update or roll backGet started - Full fine-tuning

Train the entire model for maximum control and quality.

Best forLarge or complex datasetsDeeper behavior changesDedicated infrastructureGet started

Everything you need to fine-tune at scale

Fine-tune any open-source model on your data. Deploy securely onto scalable infrastructure.

Fine-tune large open-source models like Kimi-K2 and GLM-4.7 for tool use, reasoning, and agentic tasks. Drive advanced model behavior through a single API without managing underlying training infrastructure.

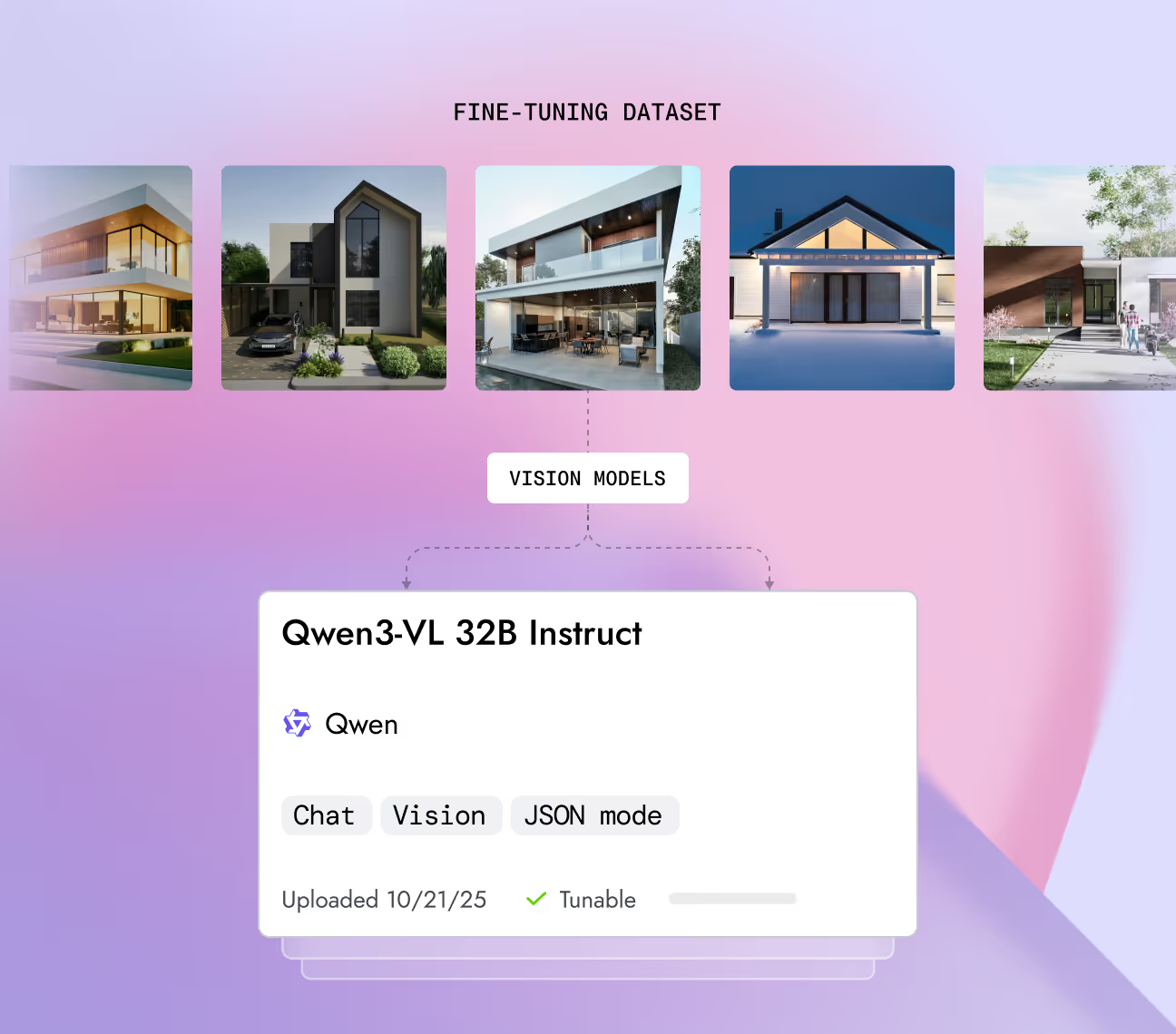

Train vision models directly on raw image data without format changes or special preprocessing. Include images alongside text to fine-tune Llama-4, Qwen3-VL, and Gemma-3 via standard APIS.

Train models for precise tool execution by integrating function definitions and tool calls directly into datasets. Process existing agent logs as-is to improve accuracy without manually restructuring data.

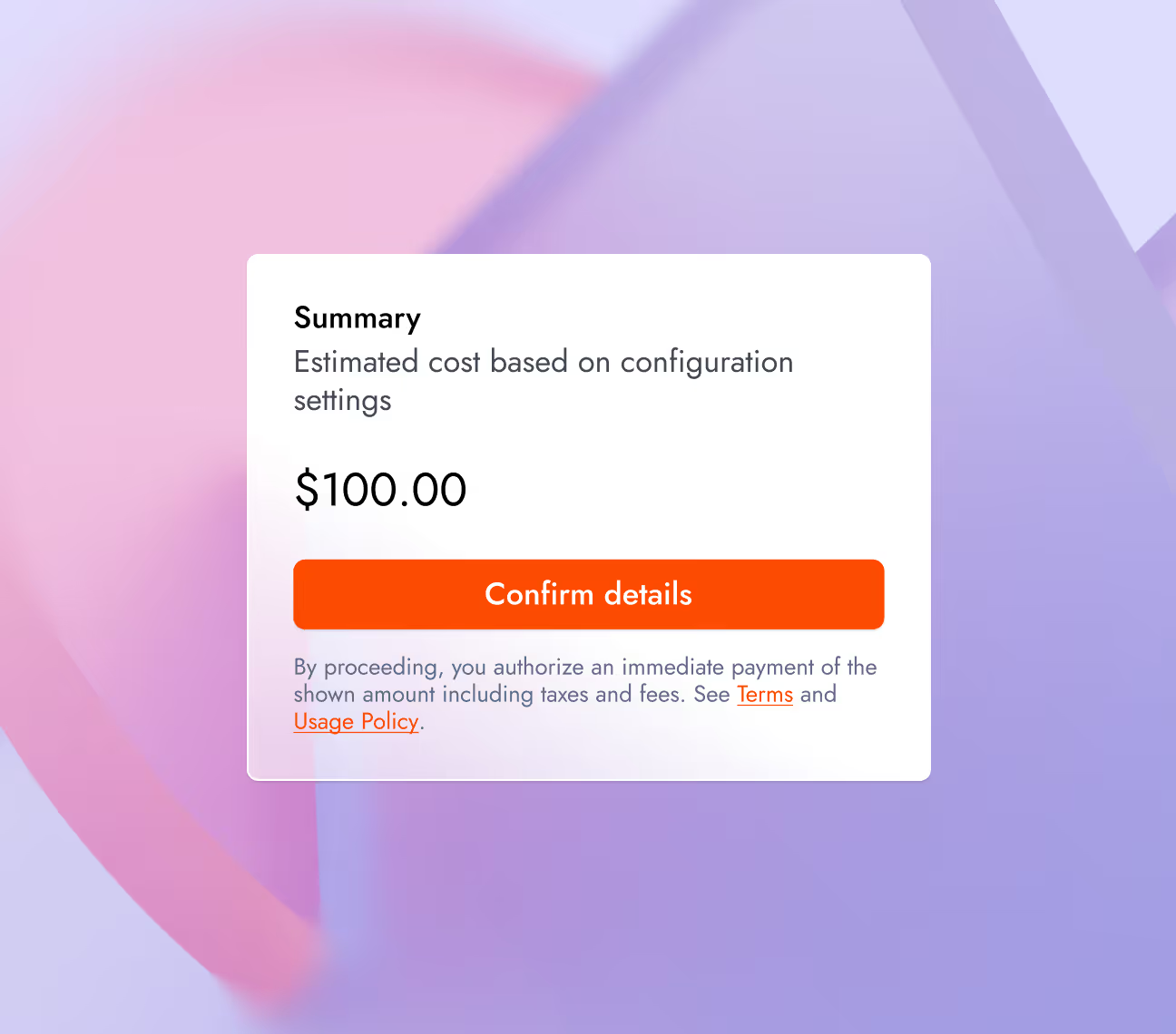

Estimate training costs before launching any job directly from the UI or CLI. Evaluate resource requirements upfront to eliminate budget surprises.

Powered by leading research

Our fine-tuning infrastructure is built on research and optimized for scale, efficiency, and production performance.

Throughput (TPS)

- Upipe

- FPDT

- ALST

UPipe vs other SOTA Approaches

82.5% less memory

Long-context training hits a memory wall at the attention layer. UPipe processes attention heads in smaller chunks, cutting peak activation memory by up to 82.5% — enabling 5M token context lengths on a single 8×H100 node.

learn moreContext parallelism approaches on long-context training

- Together AI (DCT)

- Baseline (LD)

FFT Optimizer results

25% less memory

Fine-tuning large models is memory-hungry. Our FFT-based optimizer replaces expensive SVD projections with fast Fourier transforms, reducing optimizer memory by up to 25% with no loss in training quality.

learn more

Advanced model shaping capabilities

For teams pushing models beyond standard fine-tuning

- Speculative decoding

Accelerate inference with custom speculative decoding, training lightweight draft models to predict multiple tokens

- Quantization

Apply FP8 and NVFP4 quantization to push the limits of model efficiency, maximizing hardware utilization with minimal quality loss.

- Reinforcement learning

Leverage PyTorch-based reinforcement learning to shape model policies for reasoning, tool use, and long-horizon agentic behavior.

Production-grade

security and data privacy

We take security and compliance seriously, with strict data privacy controls to keep your information protected. Your data and models remain fully under your ownership, safeguarded by robust security measures.

NVIDIA preferred partner

NVIDIA preferred partner- AICPA SOC 2 Type II