
Deepseek-67B

LLM

Deepseek-67B was trained from scratch on 2 trillion tokens of English and Chinese text.


API Usage

Endpoint

deepseek-ai/deepseek-llm-67b-chat

RUN INFERENCE (cURL)

    curl -X POST "https://api.together.xyz/v1/chat/completions" \
      -H "Authorization: Bearer $TOGETHER_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "deepseek-ai/deepseek-llm-67b-chat",
        "messages": [{"role": "user", "content": "What are some fun things to do in New York?"}]
      }'
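For readers who want to see what the cURL call sends without installing an SDK, here is a minimal sketch using only the Python standard library. The URL, headers, model name, and message format are copied from the cURL example; the helper names `build_chat_request` and `send_chat_request` are hypothetical, and the response indexing assumes the same `choices[0].message.content` shape that the SDK examples on this page read.

```python
import json
import urllib.request

API_URL = "https://api.together.xyz/v1/chat/completions"
MODEL = "deepseek-ai/deepseek-llm-67b-chat"


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) the POST request from the cURL example."""
    body = json.dumps({
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat_request(req: urllib.request.Request) -> str:
    """Send the request and return the first reply (hypothetical helper).

    Needs network access and a valid API key; the response shape is assumed.
    """
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)["choices"][0]["message"]["content"]
```

Keeping construction separate from sending makes it possible to inspect the payload and headers before any network call is made.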

RUN INFERENCE (Python)

    from together import Together

    client = Together()

    response = client.chat.completions.create(
        model="deepseek-ai/deepseek-llm-67b-chat",
        messages=[{"role": "user", "content": "What are some fun things to do in New York?"}],
    )

    print(response.choices[0].message.content)
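The examples on this page read the reply from `choices[0].message.content`. When working with the decoded JSON body as a plain dictionary, a small guard around that lookup can make failures easier to diagnose. The sketch below assumes the body mirrors that attribute path; `first_message_content` is a hypothetical helper, and the sample dictionary is a hand-written stand-in, not real model output.

```python
def first_message_content(response: dict) -> str:
    """Return the text of the first choice, or raise ValueError if it is missing."""
    choices = response.get("choices") or []
    if not choices:
        raise ValueError("response contains no choices")
    message = choices[0].get("message") or {}
    content = message.get("content")
    if content is None:
        raise ValueError("first choice has no message content")
    return content


# Hand-written stand-in for a decoded response body (assumed shape).
sample = {
    "choices": [
        {"message": {"role": "assistant", "content": "placeholder text"}}
    ]
}
print(first_message_content(sample))  # prints "placeholder text"
```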

RUN INFERENCE (TypeScript)

    import Together from "together-ai";

    const together = new Together();

    const response = await together.chat.completions.create({
      messages: [{"role": "user", "content": "What are some fun things to do in New York?"}],
      model: "deepseek-ai/deepseek-llm-67b-chat",
    });

    console.log(response.choices[0].message.content);

Model Provider: DeepSeek

Type: Chat

Parameters: 67B

Deployment: ✔ Serverless

Context length: 4096 tokens

Pricing: $1.20
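The 4096-token context length bounds how much text fits in a single request. A rough budgeting sketch follows, assuming (as is typical for chat models, though not stated on this page) that the prompt and the generated reply share the same window. `max_completion_tokens` is a hypothetical helper, and the prompt token count must come from a tokenizer, which this sketch does not provide.

```python
CONTEXT_LENGTH = 4096  # context length listed for this model


def max_completion_tokens(prompt_tokens: int,
                          context_length: int = CONTEXT_LENGTH) -> int:
    """Tokens left for the reply, assuming prompt and reply share one window."""
    if prompt_tokens < 0:
        raise ValueError("prompt_tokens must be non-negative")
    # Never return a negative budget when the prompt alone exceeds the window.
    return max(context_length - prompt_tokens, 0)


print(max_completion_tokens(1000))  # prints 3096
print(max_completion_tokens(5000))  # prints 0
```

A result of 0 signals that the prompt should be shortened before sending.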


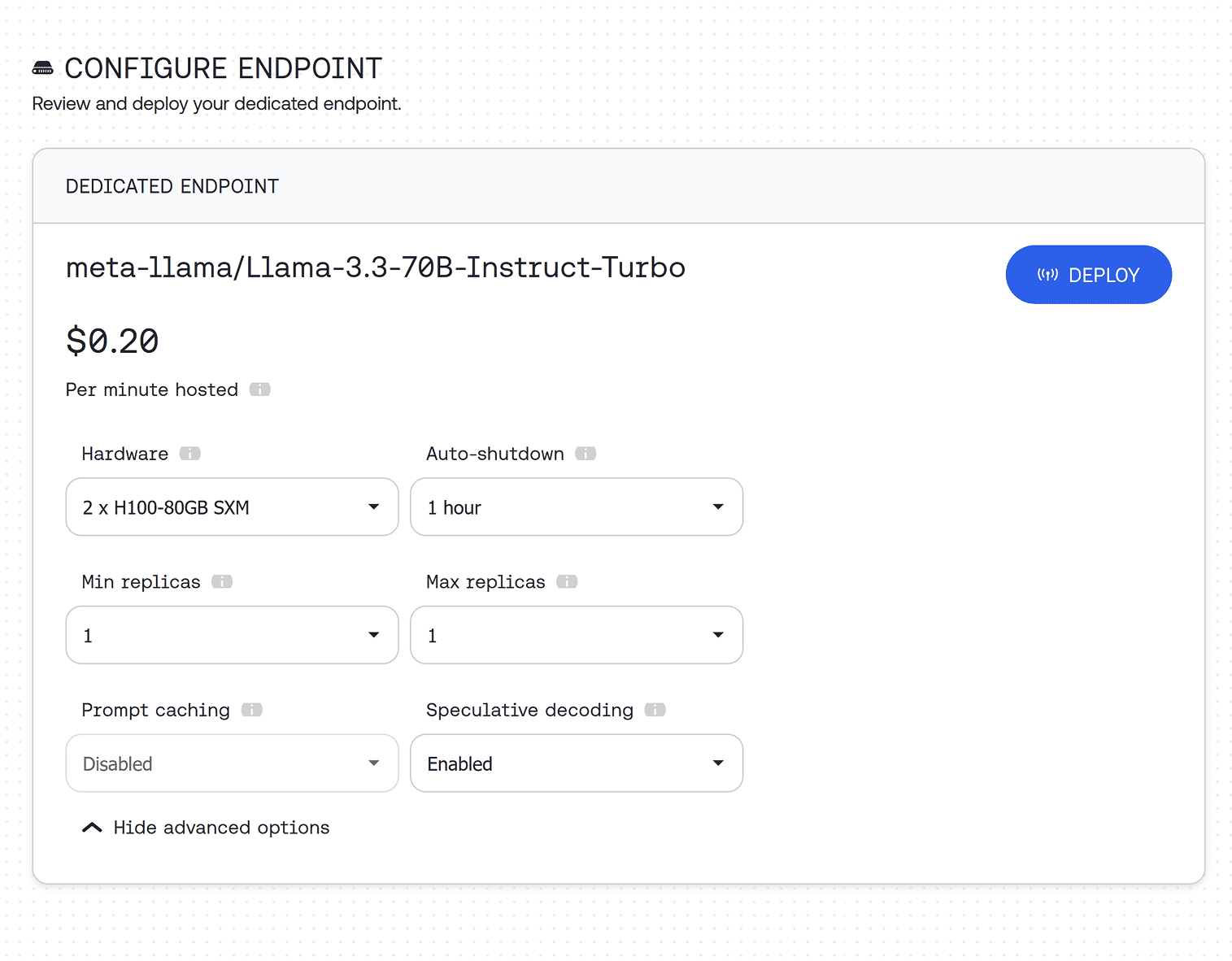

Looking for production scale? Deploy on a dedicated endpoint

Deploy Deepseek-67B on a dedicated endpoint with custom hardware configuration, as many instances as you need, and auto-scaling.