
Devstral Small 2505 API

24B coding model by Mistral AI & All Hands AI built for advanced agentic code tasks, topping SWE-bench scores.

Devstral Small 2505 API Usage

Endpoint

RUN INFERENCE

This model is available as a Together Dedicated Endpoints deployment.

Follow our Docs to configure an endpoint via our API or CLI.
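Once a dedicated endpoint is running, a request to it might look like the sketch below. It uses only the Python standard library and assumes Together's OpenAI-compatible chat completions URL. The `ENDPOINT_MODEL` value is a hypothetical placeholder (use the model name your endpoint reports), and `build_request` and `complete` are illustrative helper names, not part of any SDK.

```python
import json
import os
import urllib.request

# Assumption: Together's OpenAI-compatible chat completions URL.
API_URL = "https://api.together.xyz/v1/chat/completions"
# Hypothetical placeholder: replace with the model name of your own endpoint.
ENDPOINT_MODEL = "your-account/devstral-small-2505-endpoint"


def build_request(prompt: str, api_key: str,
                  model: str = ENDPOINT_MODEL) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 512,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def complete(prompt: str) -> str:
    """Send the request and return the first choice's text."""
    api_key = os.environ["TOGETHER_API_KEY"]  # read the key from the environment
    with urllib.request.urlopen(build_request(prompt, api_key)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With `TOGETHER_API_KEY` set, calling `complete("Write a function that reverses a linked list.")` would return the model's reply as a string. Keeping request construction separate from sending makes the payload easy to inspect before any network call is made.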

- Model Provider: Mistral

- Type: Code

- Variant: Small

- Parameters: 24B

- Deployment: Dedicated

- Context length: 128k

How to use Devstral Small 2505

Model details

Devstral is an agentic LLM for software engineering tasks, built through a collaboration between Mistral AI and All Hands AI 🙌. It excels at using tools to explore codebases, editing multiple files, and powering software engineering agents. Its performance on SWE-bench positions it as the #1 open-source model on this benchmark.

It is fine-tuned from Mistral-Small-3.1 and therefore has a long context window of up to 128k tokens. As a coding agent, Devstral is text-only: the vision encoder was removed from Mistral-Small-3.1 before fine-tuning.

For enterprises requiring specialized capabilities (increased context, domain-specific knowledge, etc.), we will release commercial models beyond what Mistral AI contributes to the community.

Learn more about Devstral in this blog post.

Key Features

- Agentic coding: Devstral is designed to excel at agentic coding tasks, making it a great choice for software engineering agents.

- Lightweight: With its compact size of just 24 billion parameters, Devstral is light enough to run on a single RTX 4090 or a Mac with 32GB RAM, making it suitable for local deployment and on-device use.

- Apache 2.0 License: Open license allowing usage and modification for both commercial and non-commercial purposes.

- Context Window: A 128k context window.

- Tokenizer: Utilizes a Tekken tokenizer with a 131k vocabulary size.
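To put the single-GPU claim in context, the back-of-the-envelope sketch below estimates the memory needed for the weights alone at a few precisions. The bytes-per-parameter values are generic assumptions, not figures from the model card, and the estimate ignores the KV cache, activations, and runtime overhead.

```python
PARAMS = 24e9  # 24 billion parameters, as stated in the feature list

# Assumed storage cost per parameter for common precisions (illustrative).
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_gib(params: float, bytes_per_param: float) -> float:
    """Memory for the weights alone, in GiB (2**30 bytes)."""
    return params * bytes_per_param / 2**30


for precision, size in BYTES_PER_PARAM.items():
    print(f"{precision}: {weight_gib(PARAMS, size):.1f} GiB")
```

At 16-bit precision the weights alone come to roughly 45 GiB, more than the 24 GB of memory on an RTX 4090, so local use on that card presumably relies on a quantized build. The source does not say which quantization is intended.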

Benchmark Results

SWE-Bench

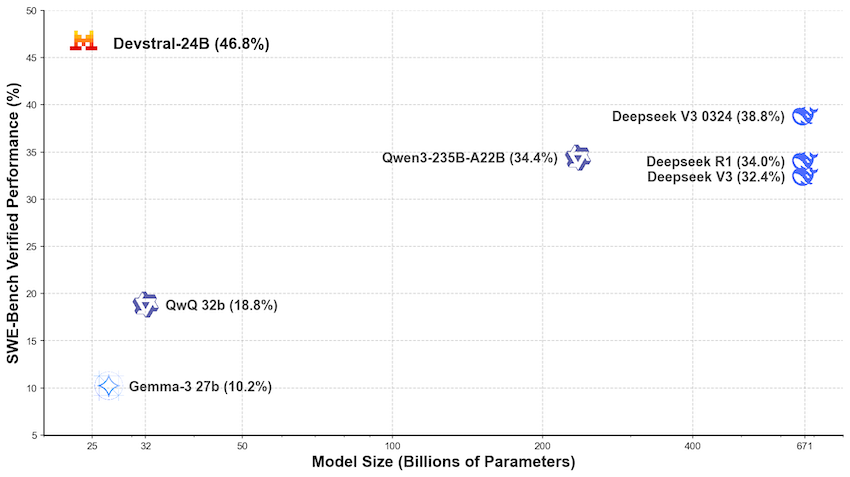

Devstral achieves a score of 46.8% on SWE-Bench Verified, outperforming the prior open-source SoTA by about 6 percentage points.

| Model | Scaffold | SWE-Bench Verified (%) |
|---|---|---|
| Devstral | OpenHands Scaffold | 46.8 |
| GPT-4.1-mini | OpenAI Scaffold | 23.6 |
| Claude 3.5 Haiku | Anthropic Scaffold | 40.6 |
| SWE-smith-LM 32B | SWE-agent Scaffold | 40.2 |

When evaluated under the same test scaffold (OpenHands, provided by All Hands AI 🙌), Devstral outperforms far larger models such as DeepSeek-V3-0324 and Qwen3 235B-A22B.
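The margins implied by the table above can be checked with a few lines of arithmetic. The sketch below copies the scores from the table; `margins` is an illustrative helper name. Note that each model was run under a different scaffold, so the gaps are indicative rather than a controlled comparison.

```python
# SWE-Bench Verified scores (%), copied from the table above.
SCORES = {
    "Devstral": 46.8,
    "GPT-4.1-mini": 23.6,
    "Claude 3.5 Haiku": 40.6,
    "SWE-smith-LM 32B": 40.2,
}


def margins(scores: dict[str, float], reference: str) -> dict[str, float]:
    """Percentage-point lead of `reference` over every other model."""
    ref = scores[reference]
    return {
        model: round(ref - score, 1)
        for model, score in scores.items()
        if model != reference
    }


for model, gap in margins(SCORES, "Devstral").items():
    print(f"Devstral leads {model} by {gap} points")
```

The gaps are differences in percentage points, not relative improvements.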

Prompting Devstral Small 2505

Applications & Use Cases

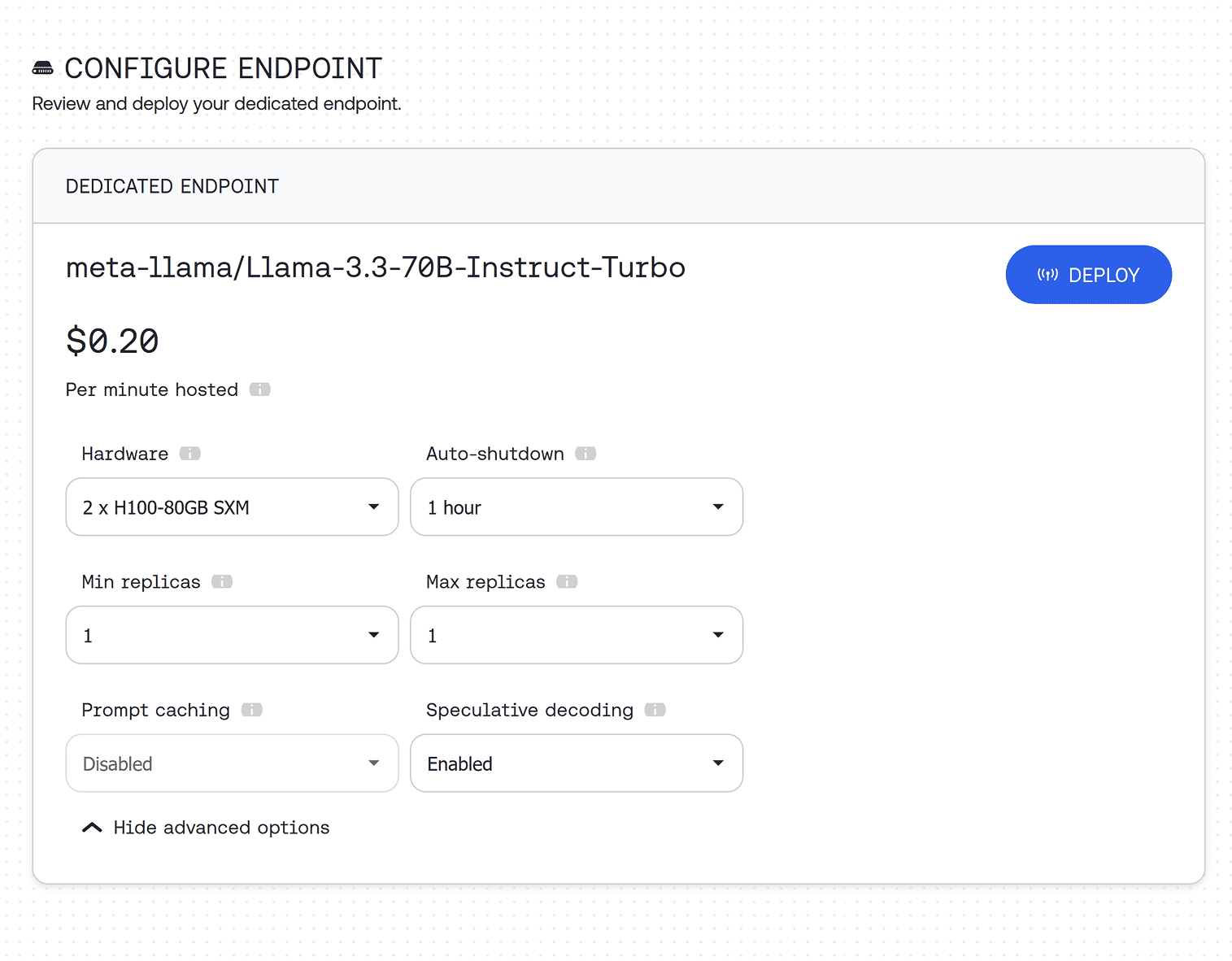

Looking for production scale? Deploy on a dedicated endpoint

Deploy Devstral Small 2505 on a dedicated endpoint with custom hardware configuration, as many instances as you need, and auto-scaling.